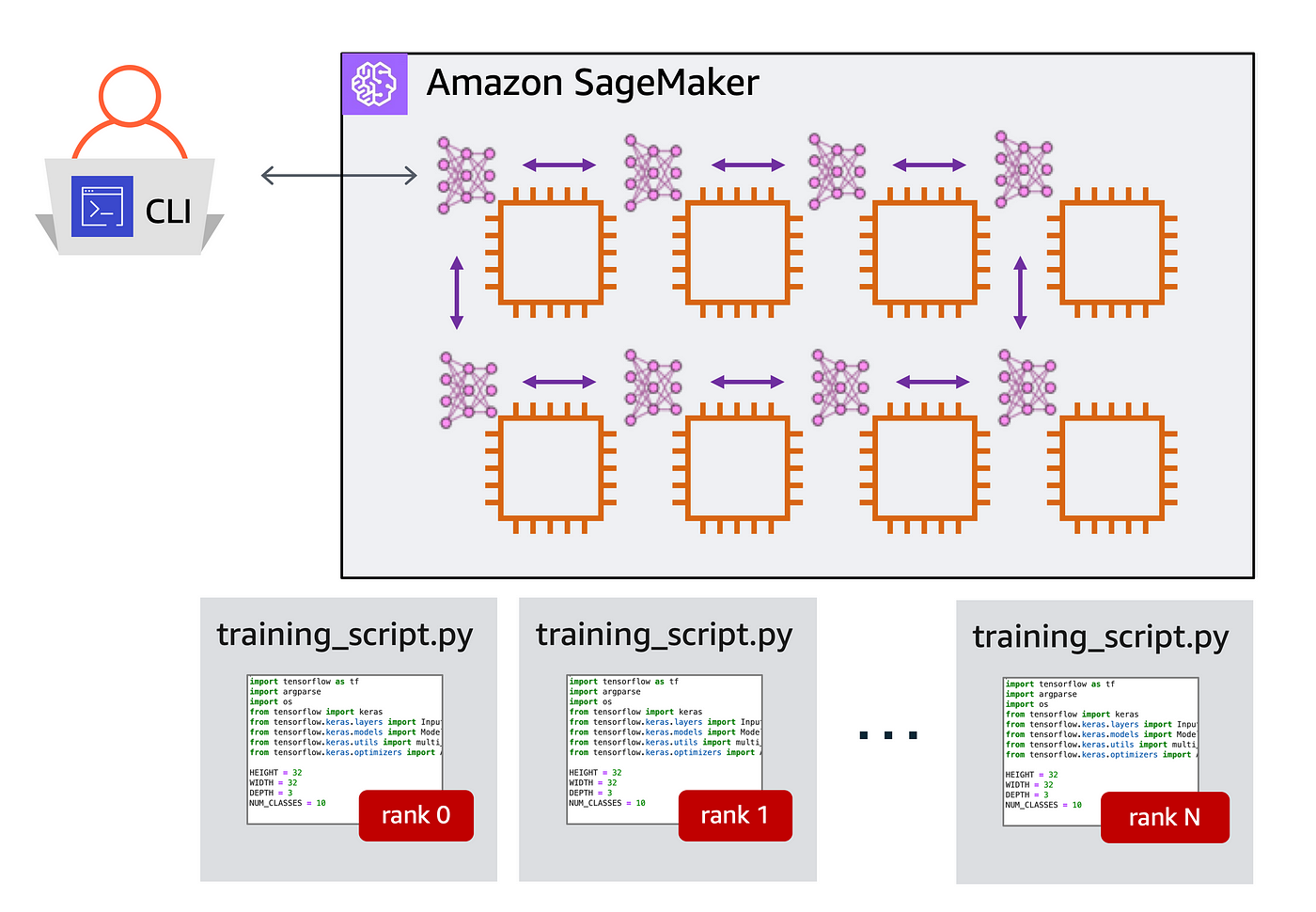

Multi-GPU and distributed training using Horovod in Amazon SageMaker Pipe mode | AWS Machine Learning Blog

François Chollet on Twitter: "Tweetorial: high-performance multi-GPU training with Keras. The only thing you need to do to turn single-device code into multi-device code is to place your model construction function under

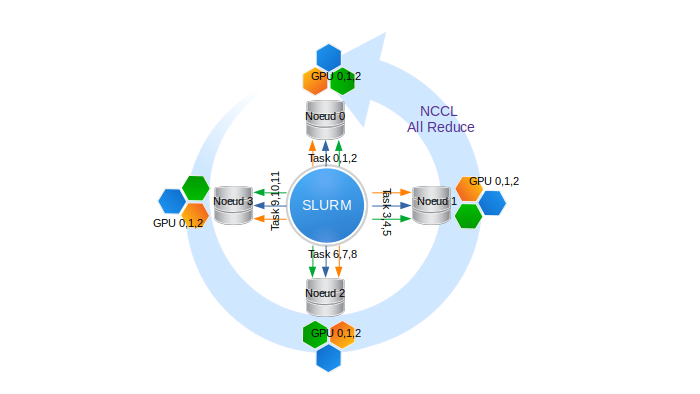

A quick guide to distributed training with TensorFlow and Horovod on Amazon SageMaker | by Shashank Prasanna | Towards Data Science