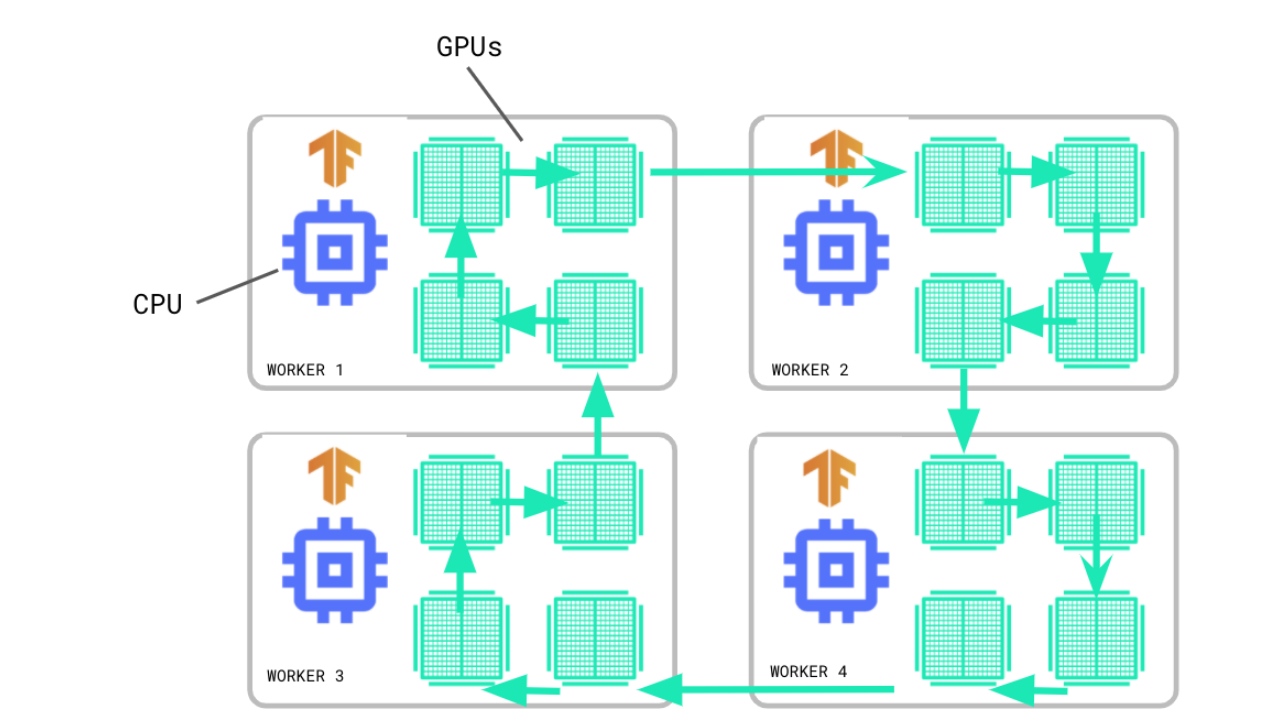

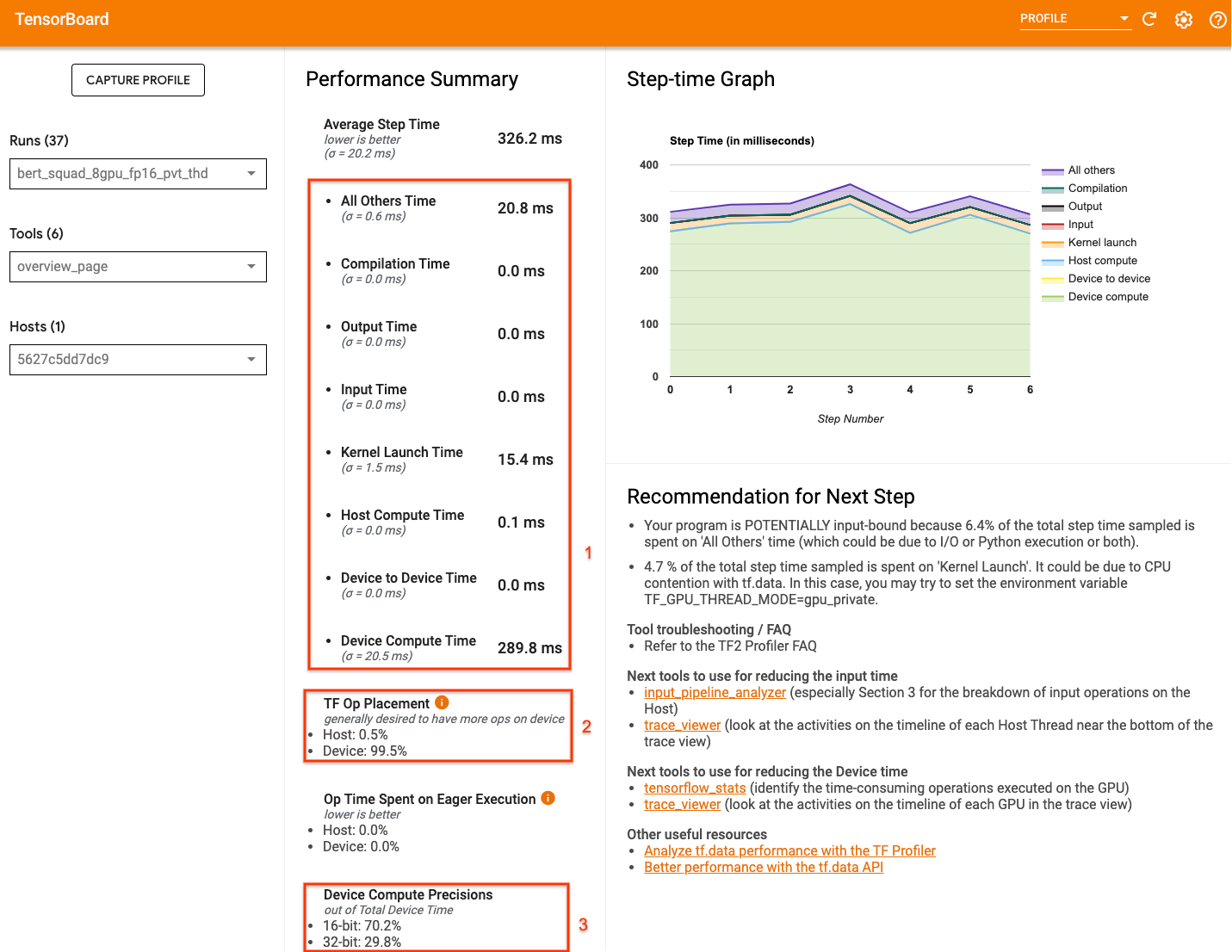

GitHub: sayakpaul/tf.keras-Distributed-Training. Shows how to use `MirroredStrategy` to distribute training workloads while keeping the regular `compile()`/`fit()` paradigm in `tf.keras`.
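A minimal sketch of the pattern that repository describes, assuming TensorFlow 2.x. This is not code from the repository: the random toy data, the layer sizes, and `PER_REPLICA_BATCH` are hypothetical placeholders. The only distribution-specific parts are creating the strategy and building/compiling the model inside `strategy.scope()`; the `fit()` call is unchanged.

```python
import numpy as np
import tensorflow as tf

# MirroredStrategy replicates the model on every visible GPU
# (with no GPU it falls back to a single CPU replica).
strategy = tf.distribute.MirroredStrategy()
print("Replicas in sync:", strategy.num_replicas_in_sync)

# Hypothetical per-replica batch size; fit() treats the dataset's batch
# size as the GLOBAL batch, so scale it by the number of replicas.
PER_REPLICA_BATCH = 32
GLOBAL_BATCH = PER_REPLICA_BATCH * strategy.num_replicas_in_sync

# Toy binary-classification data standing in for a real dataset (assumption).
x = np.random.rand(1024, 20).astype("float32")
y = np.random.randint(0, 2, size=(1024, 1)).astype("float32")
dataset = tf.data.Dataset.from_tensor_slices((x, y)).shuffle(1024).batch(GLOBAL_BATCH)

# Variables must be created inside the strategy scope so they are mirrored.
with strategy.scope():
    model = tf.keras.Sequential([
        tf.keras.Input(shape=(20,)),
        tf.keras.layers.Dense(64, activation="relu"),
        tf.keras.layers.Dense(1, activation="sigmoid"),
    ])
    model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])

# Regular fit(): Keras splits each global batch across replicas and
# all-reduces the gradients before updating the mirrored weights.
history = model.fit(dataset, epochs=2, verbose=0)
```

Because the strategy falls back to one replica when no GPU is present, the same script runs unchanged on a laptop; the speed-up only appears with two or more GPUs.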

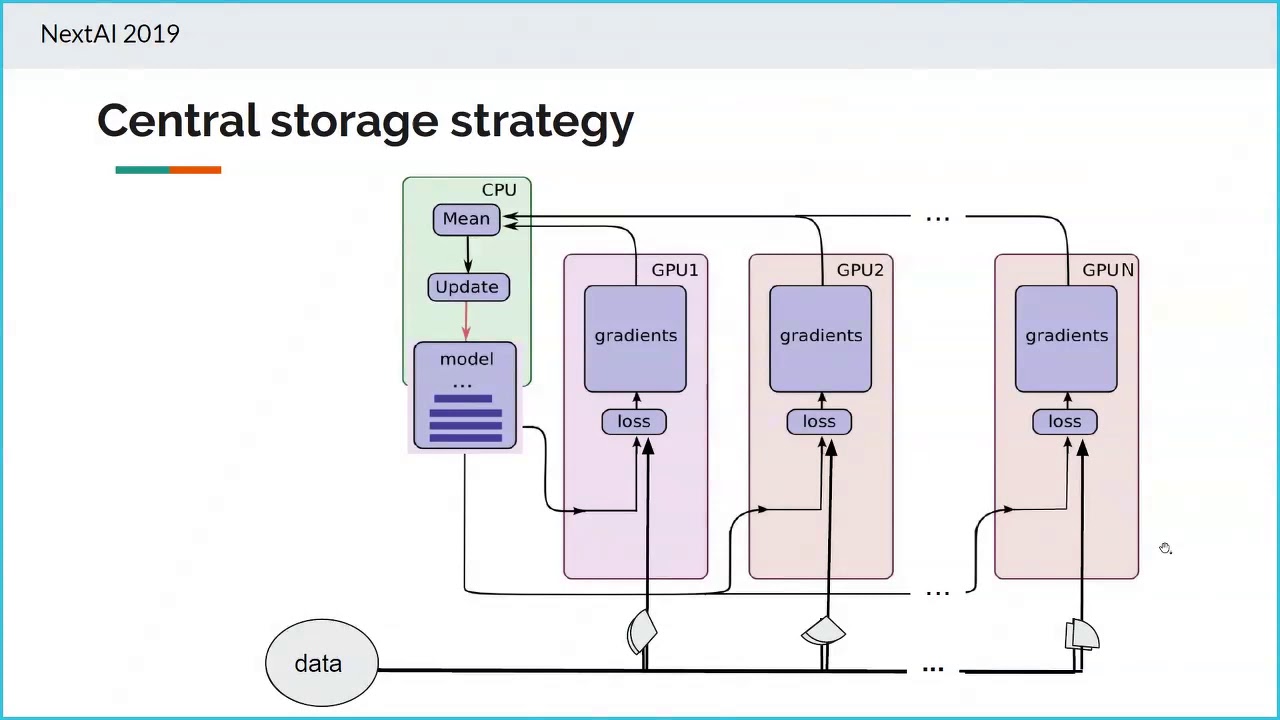

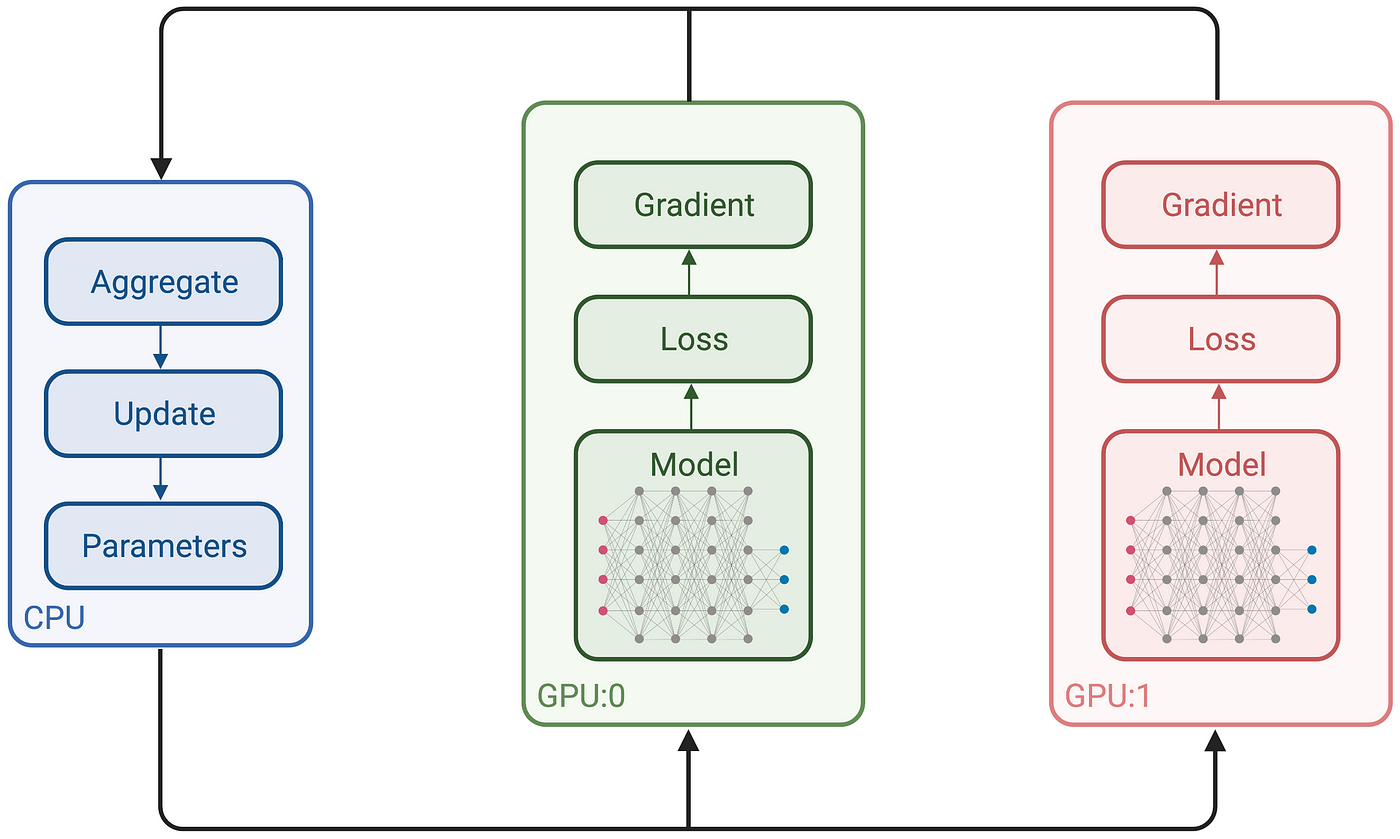

Multi-GPU training. Example using two GPUs, but scalable to all GPUs...
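Assuming the diagram depicts synchronous data parallelism (the scheme `MirroredStrategy` implements), the idea can be simulated in plain Python without any GPUs. Each "replica" holds the same weight, computes a gradient on its own shard of the batch, and the gradients are averaged (the all-reduce step) before every replica applies the same update. The 1-D linear model, learning rate, and helper names (`grad_mse`, `split`, `data_parallel_step`) are hypothetical and chosen only for illustration.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean squared error for the 1-D linear model y_hat = w * x."""
    n = len(xs)
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def split(seq, num_replicas):
    """Split a global batch into equal contiguous shards, one per replica."""
    assert len(seq) % num_replicas == 0, "global batch must divide evenly across replicas"
    size = len(seq) // num_replicas
    return [seq[i * size:(i + 1) * size] for i in range(num_replicas)]


def data_parallel_step(w, xs, ys, num_replicas, lr):
    # 1. Every replica holds an identical copy of w and receives its own shard.
    x_shards, y_shards = split(xs, num_replicas), split(ys, num_replicas)
    # 2. Each replica computes a local gradient on its shard only.
    local_grads = [grad_mse(w, xr, yr) for xr, yr in zip(x_shards, y_shards)]
    # 3. All-reduce: average the local gradients so all replicas agree.
    g = sum(local_grads) / num_replicas
    # 4. Every replica applies the same update, keeping the copies in sync.
    return w - lr * g


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # generated by y = 2x
w = 0.0
for _ in range(50):
    w = data_parallel_step(w, xs, ys, num_replicas=2, lr=0.05)
print(round(w, 4))
```

With equal-sized shards, the averaged per-replica gradient equals the full-batch gradient, which is why scaling from two replicas to more only changes `num_replicas`, provided the batch still divides evenly.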