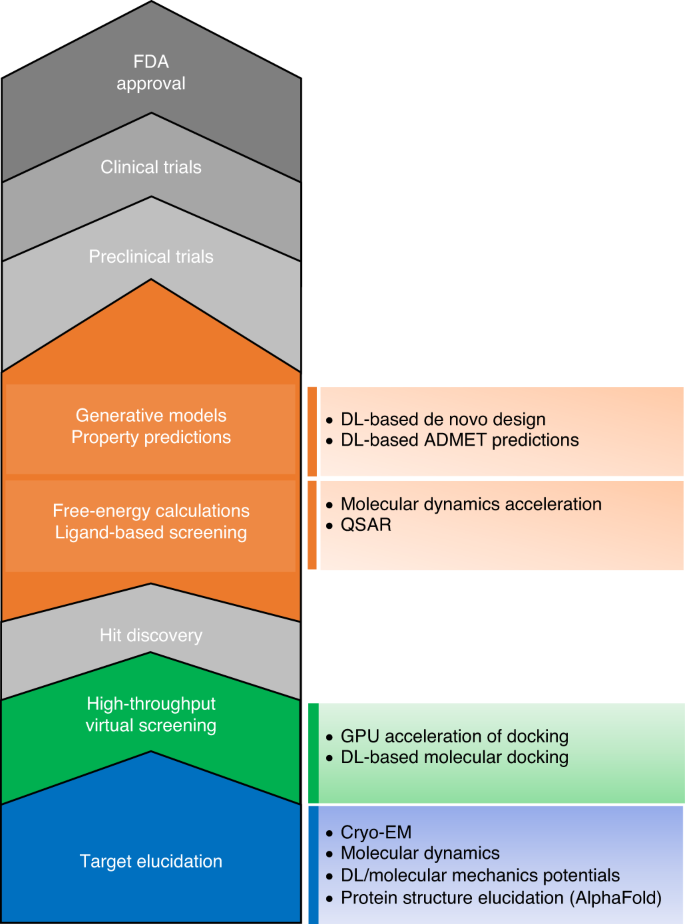

The transformational role of GPU computing and deep learning in drug discovery | Nature Machine Intelligence

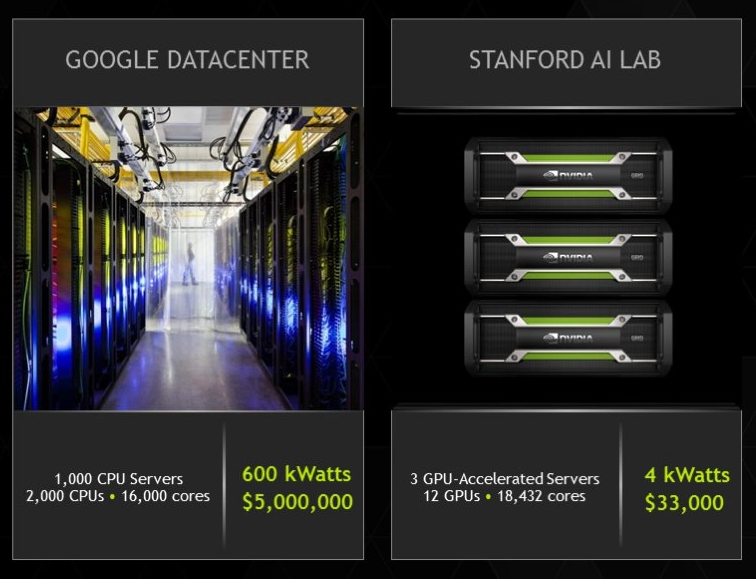

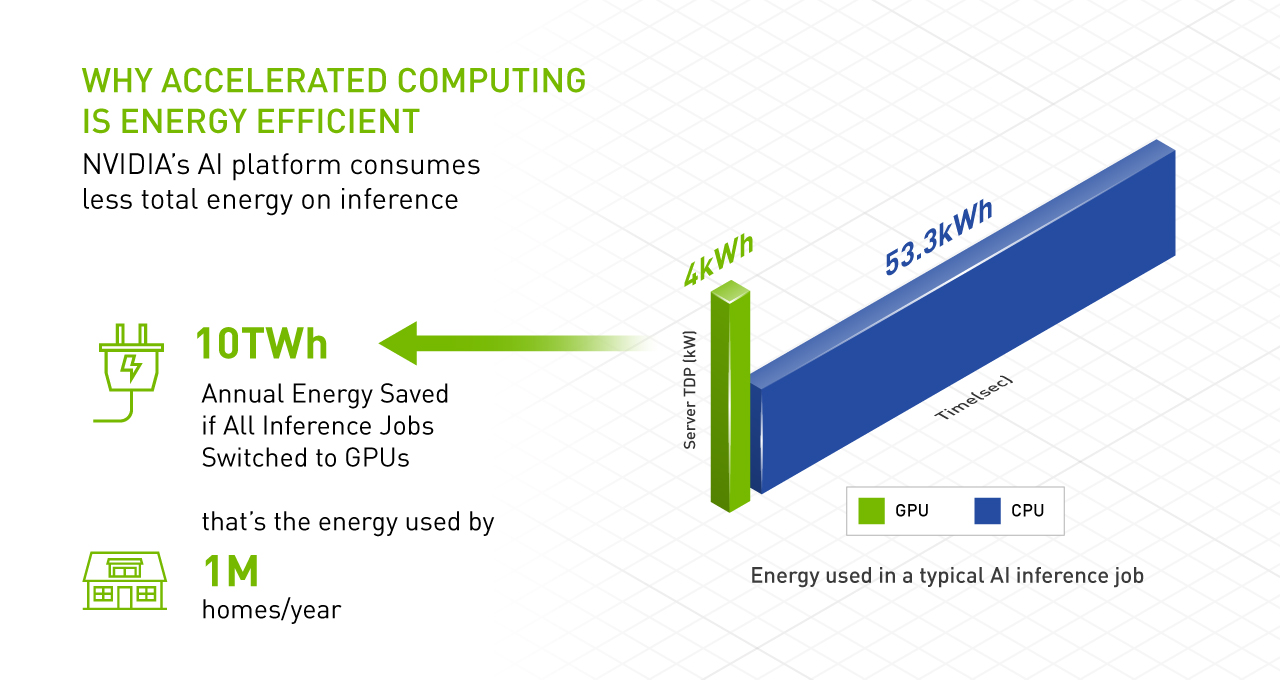

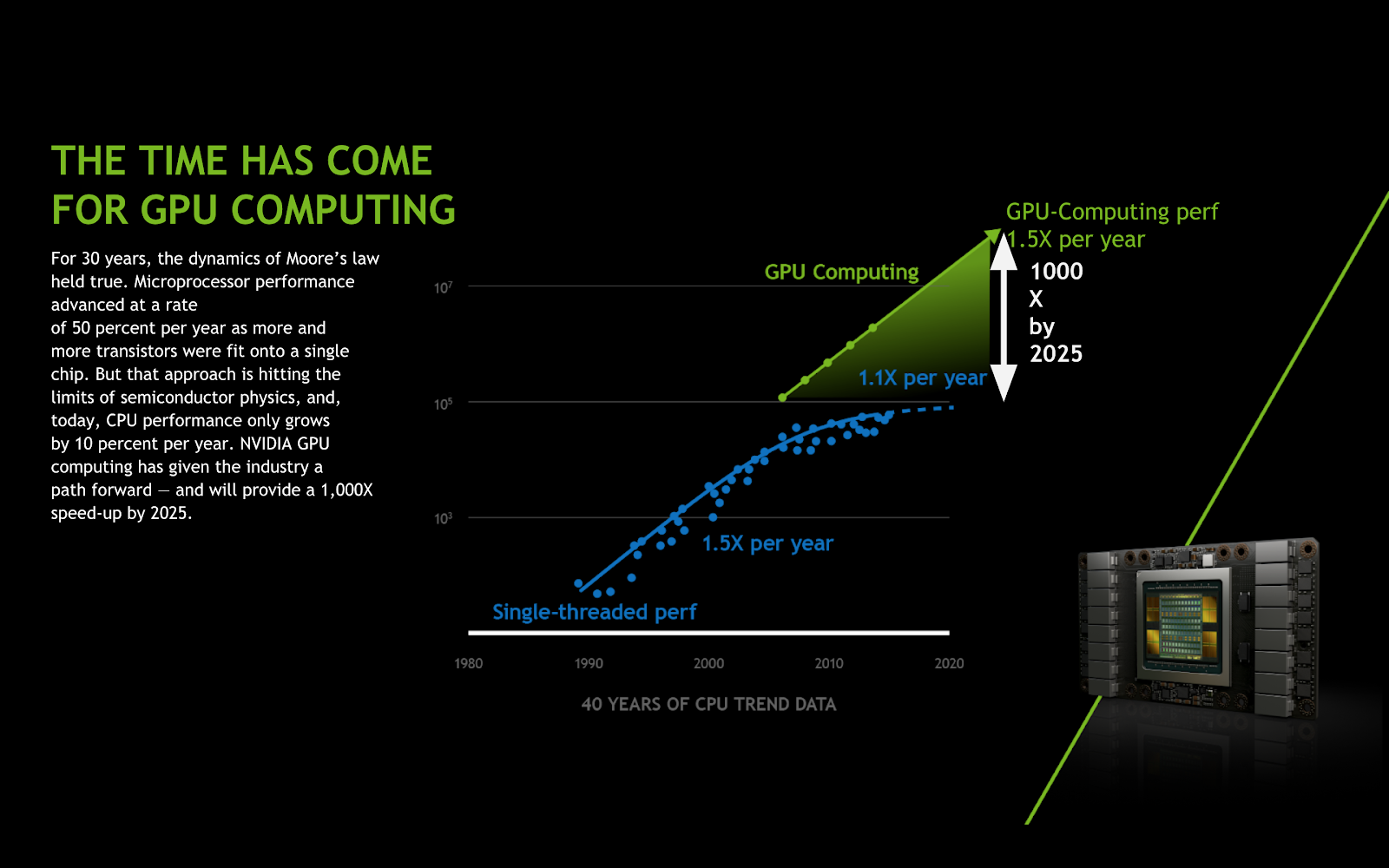

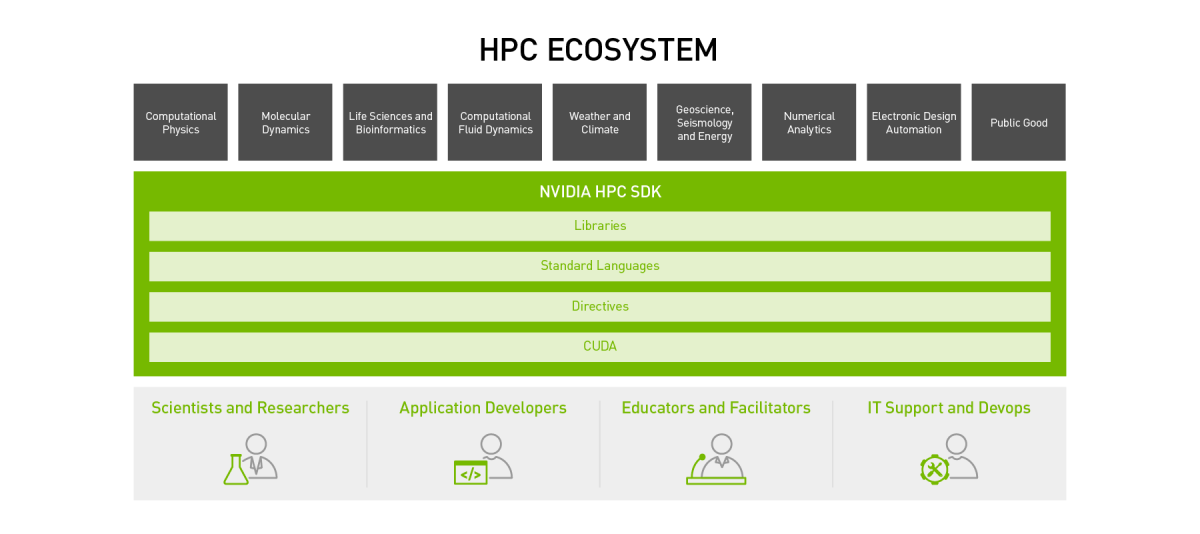

GPU-Accelerated Computing. I don't believe when someone says … | by Crypto1 | Analytics Vidhya | Medium

NVIDIA AI on Twitter: "Build GPU-accelerated #AI and #datascience applications with CUDA Python. @NVIDIA Deep Learning Institute is offering hands-on workshops on the Fundamentals of Accelerated Computing. Register today: https://t.co/XRmiCcJK1N #NVDLI ...

GPU-Accelerated Computing with Python | Information Technology @ UIC | University of Illinois Chicago

What is the Graphics Processing Unit Accelerated Computing? | by Successive Technologies | Successive Technologies | Medium